Search results related to "xAI"

xAI forum

Each keyword is its own forum, with a path that is unique across the site.

Users

Not found

Content containing xAI

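Today's Web4 information edge:

1. Nvidia competitor $CBRS has IPO'd! It was 20x oversubscribed, surged 300% at the open, and now trades at $311, with large orders from OpenAI and Amazon

2. On Trump's Beijing trip, Xi warned him that mishandling the Taiwan issue would be dangerous; H200 chips were approved for export to major Chinese companies, and $NVDA surged 10% in two days!

3. Musk took his son on a trip while making xAI employees work "007" overtime (around the clock, seven days a week); many could not take it and left, and the pre-training team is down to almost zero

4. Extremely high risk appetite and almost no risk control: 25x leverage ends in liquidation! Machi Big Brother was forced to trim his $ETH long; the liquidation price is $2,244, only $10 away

5. Binance CMO Rachel has left; after a post by the "First Sister" (一姐), $币安人 peaked at 2.2m (30x), with the top holder's unrealized profit at $30k; on the real-versus-fake Monet AI bias story, $MONET peaked at 440k (99x), with the top holder's unrealized profit at $7k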


Grok Build is amazing.

The early beta just dropped for SuperGrok Heavy users and the first real feedback from developers is overwhelmingly positive.

People are saying it already feels 10x ahead of other coding agents. It handles full agentic workflows natively, runs multiple agents in parallel, does live refactoring, and has a surprisingly polished terminal UI with both vim mode and mouse support.

It’s fast, manages huge context cleanly, and actually feels like you’re working with a real autonomous coding partner instead of just getting suggestions.

This is the kind of serious, high-quality tool xAI keeps shipping. If the beta keeps this momentum, Grok Build is going to be a really great tool for power users.

Try it out right now if you have a SuperGrok Heavy subscription.


xAI just released Grok Build CLI and it’s a game changer for developers

Grok Build is a powerful AI coding agent and CLI built for professional software engineering and complex coding workflows running directly in your terminal

With Grok Build CLI, you can:

- Plan and review tasks before execution with clean diffs

- Run multiple sub-agents in parallel for large projects

- Use headless mode for automation and scripting

- Seamlessly work with your existing tools and setups

This is xAI going all-in on giving power users real engineering tools

Currently in early beta and exclusively available for SuperGrok Heavy subscribers

If you’re on SuperGrok Heavy, you can start using it right now

Check it out here:


Xiaomi's CEO is asking Elon Musk for a selfie… while building a billion-dollar empire himself.

Lei Jun owns:

- Xiaomi

- Xiaomi smartphones

- Xiaomi smart home ecosystem

- Xiaomi IoT products

- Xiaomi wearables

- Xiaomi TVs

- Xiaomi EV division

Elon Musk owns:

- Tesla

- SpaceX

- xAI

- X (Twitter)

- Neuralink

- The Boring Company

Lei Jun focuses on:

- smartphones

- consumer electronics

- IoT ecosystem

- smart home devices

- EVs

Elon Musk focuses on:

- rockets

- AI

- robotics

- EVs

- brain tech

- social media

Lei Jun built one giant ecosystem company.

Elon Musk built multiple independent billion-dollar companies.


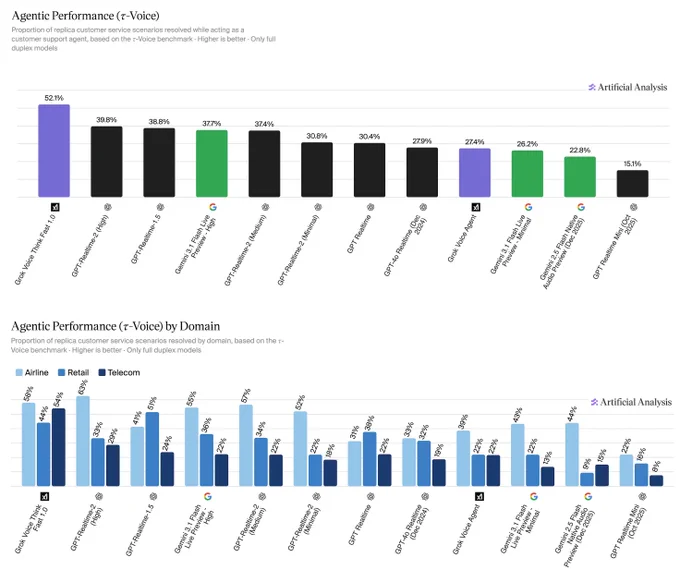

Grok Voice Think Fast 1.0 is officially the most well-rounded agentic voice AI on the market right now

It now ranks #1 in the latest τ-Voice agentic performance benchmarks in real-world tests on Artificial Analysis

The gap is massive. xAI is quietly overtaking every other model by actually building for real-world use instead of just lab demos...


Today is my last day at xAI.

I joined xAI a year ago and had the pleasure of leading the search and factuality post-training team. Over time, we developed many recipes and engineering co-optimizations, making Grok the best AI for search and real-time agents. I am also particularly proud of working with a small group of talented people to deliver the recent iterations of Grok's instant mode, the one I personally liked and used the most.

My thanks to all the friends and teammates for their support and help over the past year. They are among the brightest minds I’ve met in my career. I am sure the team will continue the mission to make Grok better and understand the universe.


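Half of the S&P 500 is sitting on the same plane

Added together, the whole plane exceeds the combined GDP of the world's two largest economies

17 top CEOs: a top-tier Wall Street plus Silicon Valley lineup is on its way

First, the list of those traveling along

Jensen Huang (Nvidia)

Elon Musk (Tesla / SpaceX / xAI)

Tim Cook (Apple)

Larry Fink (BlackRock, $12 trillion AUM)

Boeing CEO

Goldman Sachs CEO

Blackstone CEO

Citi CEO

Mastercard CEO

Qualcomm CEO

Micron CEO

Plus Trump's son Eric (in a "personal capacity")

This is not a political delegation. It is a mobile S&P 500

On the surface: trade, Taiwan, Iran

Beneath the surface:

Loosening AI chip export controls (Jensen Huang, Qualcomm, Micron)

China buying American aircraft (Boeing)

China buying American agricultural products (Cargill)

A US-China investment committee and a US-China trade committee (Blackstone, Goldman Sachs, Citi)

Digital payment interoperability (Mastercard, Visa)

AI model cooperation (Musk's xAI, Cook's Apple Intelligence)

Each item is a deal that starts at tens of billions of dollars

Diplomacy is theater for ordinary people

The real script was written into the boarding list long ago

Ordinary people watching Trump's China visit see US-China friendship

The middle class watching Trump's China visit sees tariffs rising and falling

The rich watching Trump's China visit see who is on the plane and who is not

And on that plane, the business lineup is more conspicuous than the government team

That in itself is the answer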


Announcing agentic performance benchmarking for Speech to Speech models on Artificial Analysis. We use 𝜏-Voice to measure tool calling and customer interaction voice agent capabilities in realistic customer service scenarios

Even the strongest Speech to Speech (S2S) models today resolve only about half of realistic customer service scenarios end-to-end - a meaningful gap relative to frontier text-based agents on the same tasks. Voice channels introduce significant complexity: challenging accents, background noise, and packet loss, all while requiring fast responses, consistency across long multi-turn conversations, and reliable tool use. Performance also varies considerably by audio condition: in clean audio some models perform notably better, but realistic conditions continue to pose a challenge. Conversation duration also varies meaningfully across models, with implications for both customer experience and operational cost.

About 𝜏-Voice:

Our Agentic Performance benchmark is based on 𝜏-Voice (Ray, Dhandhania, Barres & Narasimhan, 2026), which extends 𝜏²-bench into the voice modality to evaluate S2S models on realistic customer service tasks. It measures multi-turn instruction following, support of a simulated customer through a complete interaction, and tool use against simulated customer service systems. The simulated user combines an LLM-driven decision model with realistic audio synthesis: diverse accents, background noise, and packet loss modelled on real network conditions.

This complements our Big Bench Audio benchmark measuring intelligence and Conversational Dynamics (Full Duplex Bench subset) benchmark measuring conversational naturalness. Scores are the average of three independent pass@1 trials. We evaluate under realistic audio conditions using the 𝜏²-bench base task split across three domains:

➤ Airline (50 scenarios): e.g., changing a flight, rebooking under policy constraints

➤ Retail (114 scenarios): e.g., disputing a charge, processing a return

➤ Telecom (114 scenarios): e.g., resolving a billing issue, troubleshooting a service problem

Task success is determined by deterministic checks against expected actions and final database state, consistent with the 𝜏²-bench evaluator.
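The scoring rule described above (the average of three independent pass@1 trials) can be sketched in a few lines of Python. This is a minimal illustration, not the benchmark's actual evaluator: the function names are hypothetical, the trial outcomes are made up, and pooling all scenarios across the three domains before averaging is an assumption, since the post does not say how domains are weighted.

```python
from statistics import mean

# Scenario counts per domain, as listed in the post.
SCENARIOS = {"airline": 50, "retail": 114, "telecom": 114}

def pass_at_1(outcomes):
    """Fraction of scenarios resolved in one trial (one attempt per scenario)."""
    return sum(outcomes) / len(outcomes)

def benchmark_score(trials):
    """Average pass@1 over independent trials.

    `trials` is a list of trials; each trial is a list of booleans, one per
    scenario, pooled across all domains (pooling is an assumption).
    """
    return mean(pass_at_1(t) for t in trials)

# Made-up outcomes for illustration only: one boolean per scenario per trial.
total = sum(SCENARIOS.values())
example_trials = [
    [i < 100 for i in range(total)],  # trial 1: 100 scenarios resolved
    [i < 110 for i in range(total)],  # trial 2: 110 scenarios resolved
    [i < 120 for i in range(total)],  # trial 3: 120 scenarios resolved
]
score = benchmark_score(example_trials)
print(f"{total} scenarios, score = {score:.1%}")
```

A per-domain (macro) average would use the same `pass_at_1` helper on each domain's outcomes separately and could give a different number, because the airline domain has fewer scenarios than the other two.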

Key results:

xAI's Grok Voice Think Fast 1.0 is the clear leader at 52.1%, averaging 5.6 minutes per conversation, the second-longest overall. OpenAI's GPT-Realtime-2 (High) (39.8%, 3.0 min) and GPT-Realtime-1.5 (38.8%, 4.8 min) follow, with Gemini 3.1 Flash Live Preview - High close behind at 37.7% (3.8 min).

Speech to Speech is a fast-evolving modality and we expect movement in rankings as we continue to add new models with these capabilities, and as model robustness improves.

Congratulations @xAI @elonmusk! See below for further detail ⬇️


xAI has rolled out Skills. You can create skills that perform actions on Grok's sandbox computer, such as editing files, using connectors, or whatever else you can think of, and they can easily be reused in future queries.

Just say "Hey Grok, use the [skill name] skill and do..."

You can also import skills.md files from other LLMs to bring existing skills over easily.

This is great!


Why did xAI hand over a 220,000-GPU cluster to Anthropic?

The technical backdrop to xAI's decision to hand Colossus 1 over to Anthropic in its entirety is more interesting than it appears. xAI deployed more than 220,000 NVIDIA GPUs at its Colossus 1 data center in Memphis. Of these, roughly 150,000 are estimated to be H100s, 50,000 H200s, and 20,000 GB200s. In other words, three different generations of silicon are mixed together inside a single cluster — a "heterogeneous architecture."

For distributed training, however, this configuration is close to a disaster, according to engineers familiar with the setup. In distributed training, 100,000 GPUs must finish a single step simultaneously before the cluster can advance to the next one. Even if the GB200s finish their computation first, the remaining 99,999 chips have to wait for the slower H100s — or for any GPU that has hit a stack-related snag — to catch up. This is known as the straggler effect. The 11% GPU utilization rate (MFU: the share of theoretical FLOPs actually realized) at xAI recently reported by The Information can be read as the numerical fallout of this problem. It stands in stark contrast to the 40%-plus MFU figures achieved by Meta and Google.
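A toy model makes the straggler effect and the MFU definition above concrete. The sketch below assumes a fully synchronous step whose duration is set by the slowest GPU; the per-GPU step times, the nine-GPU cluster, and the FLOP figures are hypothetical values chosen for illustration, not measurements from Colossus 1.

```python
def sync_step_time(per_gpu_times):
    """A synchronous step ends only when the slowest GPU finishes."""
    return max(per_gpu_times)

def mfu(useful_flops_per_step, step_time_s, peak_flops_per_s):
    """Model FLOPs utilization: achieved FLOP/s over theoretical peak FLOP/s."""
    return useful_flops_per_step / (step_time_s * peak_flops_per_s)

# Hypothetical per-step compute times in seconds (not measured values).
step_times = {"GB200": 0.4, "H200": 0.8, "H100": 1.0}

# Toy cluster: a few GPUs of each generation.
cluster = ["H100"] * 6 + ["H200"] * 2 + ["GB200"] * 1
times = [step_times[gpu] for gpu in cluster]

step = sync_step_time(times)  # set by the slowest GPU
# Share of total GPU-time spent waiting for the slowest GPU.
idle_fraction = 1 - sum(times) / (len(times) * step)

# Hypothetical FLOP counts, picked so the ratio works out to 0.11.
example_mfu = mfu(useful_flops_per_step=1.1e14, step_time_s=step, peak_flops_per_s=1e15)

print(f"step time: {step:.2f} s, idle fraction: {idle_fraction:.1%}, MFU: {example_mfu:.0%}")
```

In this model the faster chips contribute nothing to step time: replacing every H200 and GB200 with an H100 would leave `step` unchanged, which is the sense in which the mixed cluster wastes its newest silicon during training.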

The problem runs deeper still. As discussed earlier, NVIDIA's NCCL has traditionally been optimized for a ring topology. It works beautifully at the 1,000–10,000 GPU scale, but once you push into the 100,000-unit range, the latency of data making one full trip around the ring becomes punishingly long. GPUs need to churn through computations rapidly to keep MFU high, but while they wait for data to arrive over the network fabric, more than half of the silicon sits idle. Google sidestepped this bottleneck with its own custom topology (Google's OCS: Apollo/Palomar), but xAI, by my read, has not yet reached that stage.
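The scaling argument above can be illustrated with the standard alpha-beta cost model for a flat ring all-reduce. This is a sketch under stated assumptions, not a description of how NCCL or xAI's fabric actually behaves: the function name and all parameter values (message size, link bandwidth, per-hop latency) are hypothetical, and real collective libraries may use tree or hierarchical algorithms that this model ignores.

```python
def ring_allreduce_time(n_gpus, message_bytes, link_bytes_per_s, hop_latency_s):
    """Alpha-beta cost model for a flat ring all-reduce.

    The ring runs 2 * (n - 1) steps (reduce-scatter, then all-gather); each
    step pays one hop latency and moves a chunk of message_bytes / n.
    """
    steps = 2 * (n_gpus - 1)
    chunk_bytes = message_bytes / n_gpus
    return steps * (hop_latency_s + chunk_bytes / link_bytes_per_s)

# Hypothetical parameters: 1 GB of gradients, 50 GB/s links, 5 microseconds per hop.
MESSAGE_BYTES = 1e9
LINK_BYTES_PER_S = 50e9
HOP_LATENCY_S = 5e-6

for n in (1_000, 10_000, 100_000):
    t = ring_allreduce_time(n, MESSAGE_BYTES, LINK_BYTES_PER_S, HOP_LATENCY_S)
    print(f"{n:>7} GPUs: {t:.3f} s per all-reduce")
```

In this model the bandwidth term stays close to `2 * message_bytes / link_bytes_per_s` regardless of ring size, while the latency term grows linearly with the number of GPUs, so at very large scale the per-hop latency dominates the time each all-reduce takes.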

Layer Blackwell's (GB200) "power smoothing" issue on top, and the picture comes into focus. According to Zeeshan Patel, formerly in charge of multimodal pre-training at xAI, Blackwell GPUs draw power so aggressively that the chip itself includes a hardware feature for smoothing power delivery. xAI's existing software stack, however, was optimized for Hopper and does not understand the characteristics of the new hardware; when it imposes irregular loads on the chip, the silicon physically destroys itself (it literally melts). That means the modeling stack must be rewritten from scratch, which in turn means scaling is far harder than most of us imagine.

Pulling all of this together points to a single conclusion. xAI judged that training frontier models on Colossus 1 simply was not efficient enough to be worthwhile. It therefore moved its own training workloads wholesale onto Colossus 2, built as a 100% Blackwell homogeneous cluster. Colossus 1, on the other hand — whose mixed architecture is far less crippling for inference, which parallelizes more forgivingly — was leased in its entirety to an Anthropic that desperately needed inference capacity.

Many observers point to what looks like a contradiction: Elon Musk poured enormous capital into building Colossus, only to hand the core asset over to a direct competitor in Anthropic. Others read it as xAI capitulating because it is a "middling frontier lab." But these are surface-level reads.

Look at the numbers and a different picture emerges. xAI today holds roughly 550,000+ GPUs in total (on an H100-equivalent performance basis), and Colossus 1 (220,000 units) accounts for only about 40% of the total available capacity. Colossus 2 — built entirely on Blackwell — is already operational and continuing to expand. Elon kept the all-Blackwell homogeneous cluster (Colossus 2) for himself and leased out the older, mixed-generation Colossus 1. In other words, he handed the pain of rewriting the stack — the MFU-11% debacle — to Anthropic, while keeping his own focus on training the next generation of models.

The real point, then, is this. Elon's objective appears to be positioning ahead of the SpaceXAI IPO at a $1.75 trillion valuation, currently floated for as early as June. The narrative SpaceXAI now needs is that xAI — long the "sore finger" — is not merely a research lab burning cash, but a business with a "neo-cloud" model in the mold of AWS, capable of leasing surplus assets at high yields.

From a cost-of-capital perspective, an "AGI cash incinerator" is far less attractive to investors than a "data-center landlord generating cash."

As noted above, the most important detail of the Colossus 1 lease is that it is for inference, not training. Unlike training, inference requires far less tightly synchronized inter-GPU communication. Even when the chips are heterogeneous, the workload parcels out cleanly across them in parallel. The straggler effect — the chief weakness of a mixed cluster — is essentially neutralized for inference workloads.

Furthermore, with Anthropic occupying all 220,000 GPUs as a single tenant, the network-switch jitter (unanticipated latency) that arises under multi-tenancy disappears. The two sides' technical weaknesses end up complementing each other almost exactly.

One insight follows. As a training cluster mixing H100/H200/GB200, Colossus 1 was an asset that could only deliver an MFU of 11%. The moment it was handed over to a single inference customer, however, that asset transformed into a cash-flow asset rented out at roughly $2.60 per GPU-hour (a weighted average of the lease rates across GPU types). For xAI, what was a "cluster from hell" for training has become a "golden goose" minting $5–6 billion in annual revenue when redeployed for inference. Elon's genius, I would argue, lies not in the model but in this asset-rotation structure.

The weight of that $6 billion becomes clearer when set against xAI's income statement. Annualizing xAI's 1Q26 net loss yields roughly $6 billion in losses per year. The $5–6 billion in annual revenue generated by leasing Colossus 1 to Anthropic, in other words, almost perfectly hedges xAI's loss figure. This single deal effectively pulls xAI to break-even.
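The revenue and break-even figures above can be checked with back-of-the-envelope arithmetic. The sketch below uses only numbers quoted in the note (220,000 GPUs, roughly $2.60 per GPU-hour, about $6 billion in annualized losses); the assumption that every GPU is billed for every hour of the year, and the function name itself, are illustrative and not taken from the source.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years

def annual_lease_revenue(gpus, usd_per_gpu_hour, utilization=1.0):
    """Yearly lease revenue in USD; utilization=1.0 means every hour is billed."""
    return gpus * usd_per_gpu_hour * HOURS_PER_YEAR * utilization

# Figures quoted in the note.
revenue = annual_lease_revenue(gpus=220_000, usd_per_gpu_hour=2.60)
annual_loss = 6e9  # annualized 1Q26 net loss cited in the note

# Share of the annualized loss that the lease revenue would cover.
coverage = revenue / annual_loss

print(f"lease revenue: ${revenue / 1e9:.2f}B per year")
print(f"share of annualized loss covered: {coverage:.0%}")
```

Under the full-billing assumption the result is about $5.0 billion a year, the low end of the quoted $5–6 billion range, which covers a little over four fifths of the annualized loss; reaching the top of the range would require a higher effective rate than $2.60.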

Heading into the SpaceXAI IPO, this functions as a core line of financial defense. From a cost-of-capital standpoint, if the image shifts from "research lab burning cash" to "infrastructure tollgate stably printing $6 billion a year," the entire tone of the offering can change.

(May 8, 2026, Mirae Asset Securities)
