Search results for "FLASH"

FLASH board

Each keyword is its own board, with a path that is unique across the site.

Users

None found

Content containing FLASH

If this isn't the sweetest thing ever ❤️ @Flash_Garrett

(via brklyn119/TT)

Gemini 3.2 Flash - Capitalizing on DeepMind's clever distillation techniques...

Rumors are that benchmarks show it's hitting 92% of GPT 5.5's performance on coding and reasoning tasks while being 15-20x cheaper on inference costs. The latency improvements are insane - sub-200ms for most queries.

Google's distillation + sparsity techniques are paying off massively. They've essentially compressed a frontier model into a flash variant without the usual quality cliff.

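Today a founder in Silicon Valley told me he is using DeepSeek V4 Flash to write code: it is blazing fast, the results are really good, and it is dirt cheap.

DeepSeek V4 Pro is currently priced at 25% of the regular rate on easyrouter, with free credit thrown in. I tried it and it really does work 👇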

Another example of how insane a player @Flash_Garrett is 😳

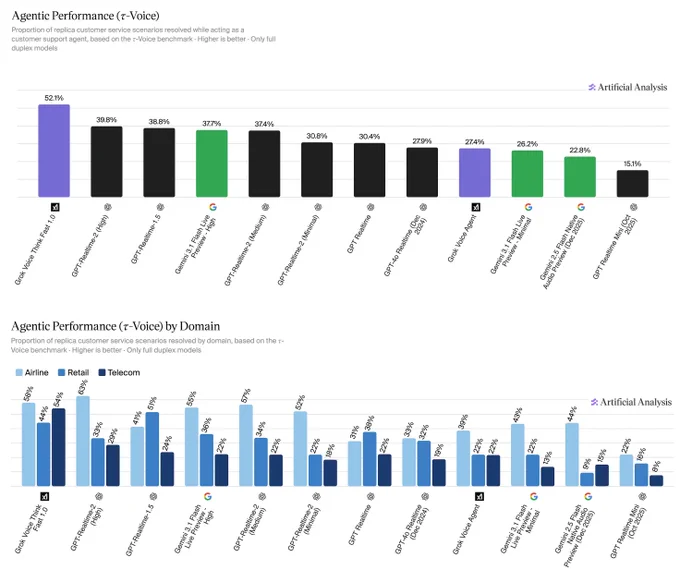

Grok Voice Think Fast 1.0 ranks #1 on the Artificial Analysis τ-Voice benchmark for real-world agentic customer service resolution

Absolutely outperforming GPT-Realtime-2 (High) and Gemini 3.1 Flash by a huge margin

That's a massive 12%+ lead over OpenAI's best model that just released a few days ago

Grok is running real-time background reasoning without the latency penalty, which is why it is already handling live Starlink phone operations autonomously at scale

Announcing agentic performance benchmarking for Speech to Speech models on Artificial Analysis. We use 𝜏-Voice to measure tool calling and customer interaction voice agent capabilities in realistic customer service scenarios

Even the strongest Speech to Speech (S2S) models today resolve only about half of realistic customer service scenarios end-to-end - a meaningful gap relative to frontier text-based agents on the same tasks. Voice channels introduce significant complexity: challenging accents, background noise, and packet loss, all while requiring fast responses, consistency across long multi-turn conversations, and reliable tool use. Performance also varies considerably by audio condition: in clean audio some models perform notably better, but realistic conditions continue to pose a challenge. Conversation duration also varies meaningfully across models, with implications for both customer experience and operational cost.

About 𝜏-Voice:

Our Agentic Performance benchmark is based on 𝜏-Voice (Ray, Dhandhania, Barres & Narasimhan, 2026), which extends 𝜏²-bench into the voice modality to evaluate S2S models on realistic customer service tasks. It measures multi-turn instruction following, support of a simulated customer through a complete interaction, and tool use against simulated customer service systems. The simulated user combines an LLM-driven decision model with realistic audio synthesis: diverse accents, background noise, and packet loss modelled on real network conditions.

This complements our Big Bench Audio benchmark measuring intelligence and Conversational Dynamics (Full Duplex Bench subset) benchmark measuring conversational naturalness. Scores are the average of three independent pass@1 trials. We evaluate under realistic audio conditions using the 𝜏²-bench base task split across three domains:

➤ Airline (50 scenarios): e.g., changing a flight, rebooking under policy constraints

➤ Retail (114 scenarios): e.g., disputing a charge, processing a return

➤ Telecom (114 scenarios): e.g., resolving a billing issue, troubleshooting a service problem

Task success is determined by deterministic checks against expected actions and final database state, consistent with the 𝜏²-bench evaluator.
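The scoring rule described above can be sketched roughly as follows. This is only an illustration, not the Artificial Analysis or 𝜏²-bench evaluator code: the `Trial` record, its field names, and the helper functions are hypothetical, and treating "expected actions" as a simple membership check is an assumption. Only the overall rule (deterministic comparison of actions and final database state, then the mean of three pass@1 trials) and the scenario counts come from the post.

```python
from dataclasses import dataclass
from statistics import mean

# Scenario counts per domain, as listed in the post.
SCENARIOS = {"airline": 50, "retail": 114, "telecom": 114}


@dataclass
class Trial:
    """One attempt at one scenario (hypothetical record layout)."""
    actions: list   # tool calls the agent made, in order
    final_db: dict  # database state after the conversation


def task_success(trial: Trial, expected_actions: list, expected_db: dict) -> bool:
    # Assumed deterministic check: every expected action occurred
    # and the final database state matches exactly.
    return all(a in trial.actions for a in expected_actions) and trial.final_db == expected_db


def pass_at_1(results: list) -> float:
    # Fraction of scenarios solved in a single attempt.
    return sum(results) / len(results)


def benchmark_score(trial_runs: list) -> float:
    # Average of independent pass@1 trials (three in the post).
    return mean(pass_at_1(run) for run in trial_runs)
```

With these counts, one trial covers 278 scenarios, so a reported score is the mean of three such passes over the full set.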

Key results:

xAI's Grok Voice Think Fast 1.0 is the clear leader at 52.1%, averaging 5.6 minutes per conversation, the second-longest overall. OpenAI's GPT-Realtime-2 (High) (39.8%, 3.0 min) and GPT-Realtime-1.5 (38.8%, 4.8 min) follow, with Gemini 3.1 Flash Live Preview - High close behind at 37.7% (3.8 min).
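As a quick check on the size of the lead, the figures above can be ranked and compared directly. The snippet below uses only the numbers quoted in the post; the variable names are illustrative.

```python
# Scores (percent of scenarios resolved) and average conversation
# length (minutes), copied from the key results above.
RESULTS = {
    "Grok Voice Think Fast 1.0": (52.1, 5.6),
    "GPT-Realtime-2 (High)": (39.8, 3.0),
    "GPT-Realtime-1.5": (38.8, 4.8),
    "Gemini 3.1 Flash Live Preview - High": (37.7, 3.8),
}

# Rank models by score, highest first.
ranked = sorted(RESULTS.items(), key=lambda kv: kv[1][0], reverse=True)
(leader, (top, _)), (runner_up, (second, _)) = ranked[0], ranked[1]

# Absolute gap in percentage points, and the gap relative to the runner-up.
lead_points = round(top - second, 1)
lead_relative = round((top - second) / second * 100, 1)

print(f"{leader} leads {runner_up} by {lead_points} points ({lead_relative}% relative)")
```

The gap to the runner-up is 12.3 percentage points, which is about 31% in relative terms, so any quoted "lead" should say which of the two it means.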

Speech to Speech is a fast-evolving modality, and we expect rankings to shift as we add new models with these capabilities and as model robustness improves.

Congratulations @xAI @elonmusk! See below for further detail ⬇️

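🔐 Solar Radio: Talking DeFi security and sounding the on-chain alarm

A post-mortem of recent DeFi thefts such as Drift and AAVE, with @DrPayFi joining to give a first-hand account of last night's exploit of the @humafinance v1 protocol. In the dark forest, how can project teams avoid similar incidents in the future? How can users assess protocol risk and protect their assets? This episode brings together security experts and DeFi project teams to discuss these questions from different angles.

⏰ Wednesday, May 13, 9pm HKT

🧑‍🏫 Host: @day1globalpod Co-founder @starzq

Guests: @humafinance Co-founder @DrPayFi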

@TradeNeutral @Neutraltrade_CN Founder Jared

@zerodriftsec Founder @Normanrockon

@FlashRescue Co-founder Darcy

OpenCode x DeepSeek V4 Flash - free for a limited time

DeepSeek V4 Flash is currently our most popular model in Go

Give it a try if you haven’t already

🚨SlowMist TI Alert🚨

💸 Loss: 140,180 USDT (140,180,175,562 tokens)

🔍 Root Cause: Missing access control in addUsers (0x4777ff62) function of PayrollDistribution. Anyone can register users for existing drop and set arbitrary totalAmount.

📌 Attacker: 0x90b147592191388e955401af43842e19faa87ee2

📌 Victim: 0xa184af4b1c01815a4b57422a3419e4fb78a96ee4

📌 Vulnerable Contract: 0xef2c77f3b9b8aaa067239bc6b4588bae26433494

Attacker registered exploit contract via addUsers in constructor, flash loaned USDT deposit, claimed oversized payroll from drop #3.

Powered by #SlowMist.AI
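The root cause named in the alert, a registration function with no caller check, can be illustrated with a toy model. This is a hedged sketch in Python, not the real PayrollDistribution contract: the class layout, method names, and the owner-only fix are assumptions made for illustration, and the flash-loaned deposit step is not modelled.

```python
class PayrollDistribution:
    """Toy in-memory model of a payroll drop; not the real contract."""

    def __init__(self, owner: str):
        self.owner = owner
        self.allocations = {}  # (drop_id, user) -> claimable amount

    def add_users_vulnerable(self, caller: str, drop_id: int, users: dict) -> None:
        # Bug pattern from the alert: nothing checks who is calling,
        # so anyone can register users with an arbitrary total amount.
        for user, total_amount in users.items():
            self.allocations[(drop_id, user)] = total_amount

    def add_users_guarded(self, caller: str, drop_id: int, users: dict) -> None:
        # One possible fix (an assumption): only the owner may register users.
        if caller != self.owner:
            raise PermissionError("caller is not the owner")
        self.add_users_vulnerable(caller, drop_id, users)

    def claim(self, caller: str, drop_id: int) -> int:
        # Pays out whatever was registered for the caller, once.
        return self.allocations.pop((drop_id, caller), 0)
```

In this model, an attacker calling `add_users_vulnerable("attacker", 3, {"attacker": 140_180_175_562})` can then claim the full amount from drop 3, while the guarded variant raises `PermissionError` for the same call. The token count in the alert matches the USDT figure if the token uses 6 decimals, which is an assumption since the alert does not state the chain.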

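Google has released Gemini 3.1 Flash-Lite: first Flash, now Lite on top of it. It is pitched at low latency and low price. I took a look, and it is only a tiny bit cheaper than the current DeepSeek V4 Pro and far more expensive than DeepSeek V4 Flash.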
